Some months ago, I became one of the many victims of a dreaded massive layoff. Due to personal issues — among them caring for my father after he fell ill with COVID — I’m still searching for a job.

In this search, I’ve encountered a trend that’s relatively new to me: the rise of coding tests. I studied data structures and algorithms during my degree, but that was more years ago than I’m comfortable admitting. Years of just storing data in databases and retrieving it to display on a screen haven’t exactly kept those skills sharp.

In my last set of interviews, I failed more often than I’d like to admit. Thankfully, I came across a kind team lead (yes, they exist!) who gave me valuable feedback on my performance. With his guidance, I’ve developed a plan to succeed in future interviews. But that’s a topic for another post.

The problem: Find any local maxima#

One of the coding problems I encountered was to find a local maxima in an array of integers. A local maxima is a value greater than its neighbours, considering only one neighbour for the first and last elements. The input array had an additional constraint: you wouldn’t find any repeated values.

The key insight needed to properly solve this problem (which I failed to find without a bit of help due to a severe case of rustiness in some parts of my brain) is this: if you select any value which isn’t a local maxima, you are guaranteed to find one on the side of a neighbour that’s greater than the current value.

Here’s an example:

let values = [5, 15, 1, 6, 8, 9, 7]If we pick the middle position (6), this isn’t a local maxima, since 6 < 8 (right neighbour). This 8 becomes a local maxima candidate (it is already halfway there after all). So if we now take the right half of the array:

let rightSideValues = [8, 9, 7]we are guaranteed to have a local maxima. Why? 8 was already greater than its left neighbour, so we have two cases:

- either

8is greater than its right neighbour, then8is a local maxima - or

8is less than its right neighbour, in which case we can discard it and repeat the process, potentially finding the maxima at the end of this subarray

We have a typical divide and conquer approach which will give us O(log(n)) complexity. With this insight in mind implementing this should be trivial… Or maybe not?

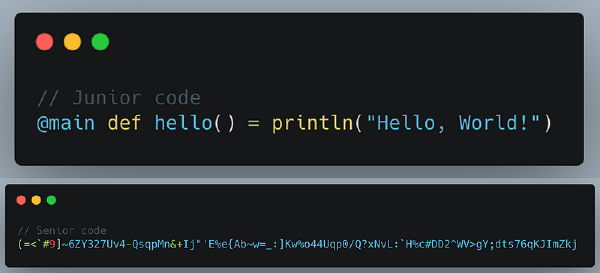

The solution: Swift vs Scala (or imperative vs functional)#

During the interview, I mistakenly used Swift, despite having spent months on the bench and, adding insult to injury, recently diving into Scala again. My brain works in a curious way and tends to create big pictures while forgetting details and mixing things up if I don’t refresh them continuously, so I leaned toward functional programming, leading to some interesting decisions that most likely confused my interviewer.

A few days after the interview, I revisited and solved the problem using Scala, but just out of curiosity I asked ChatGPT about the solution in Swift to compare it with my solution. Here’s the imperative Swift solution it generated:

func findAnyLocalMaxima(in numbers: [Int]) -> Int? {

guard numbers.count > 1 else { return numbers.first }

var left = 0

var right = numbers.count - 1

while left <= right {

let mid = left + (right - left) / 2

// Check if mid is a local maxima

if (mid == 0 || numbers[mid] > numbers[mid - 1]) &&

(mid == numbers.count - 1 || numbers[mid] > numbers[mid + 1]) {

return numbers[mid]

}

// If left neighbor is greater, search the left half

if mid > 0 && numbers[mid - 1] > numbers[mid] {

right = mid - 1

} else {

// Otherwise, search the right half

left = mid + 1

}

}

return nil

}What was my solution in Scala like? Let’s take a look at the functional alternative:

import scala.annotation.tailrec

import scala.collection.IndexedSeqView

def findAnyLocalMaxima(numbers: Array[Int]): Option[Int] =

extension (numbers: IndexedSeqView[Int])

def hasLocalMaximaAt(index: Int) =

numbers(index) > numbers(index - 1) && numbers(index) > numbers(index + 1)

def leftHalf = numbers.slice(0, numbers.length / 2)

def rightHalf = numbers.slice(numbers.length / 2 + 1, numbers.length)

@tailrec

def findAnyLocalMaximaInView(numbers: IndexedSeqView[Int]): Option[Int] =

numbers.length match

case length if length > 2 =>

val middleIndex = length / 2

if numbers.hasLocalMaximaAt(middleIndex) then

Some(numbers(middleIndex))

else if numbers(middleIndex - 1) > numbers(middleIndex) then

findAnyLocalMaximaInView(numbers.leftHalf)

else

findAnyLocalMaximaInView(numbers.rightHalf)

case 2 => Some(math.max(numbers(0), numbers(1)))

case 1 => Some(numbers(0))

case 0 => None

end findAnyLocalMaximaInView

findAnyLocalMaximaInView(numbers.view)

end findAnyLocalMaximaOk, I admit it, I got a bit fancy with that extension to make the main code even more readable.

Comparisons are odious, so let’s compare!#

Functional programming emphasises referential transparency — the ability to replace a function call with its result without changing the program’s behaviour, as long as you use pure functions. This aligns with recursive approaches, where you rewrite the problem into smaller cases to reach a base case. As a result, functional programming favours recursive calls instead of loops, because that way you express much better the intent of your code.

If you inspect the above functional code the algorithm’s intent is crystal clear:

- Check if the middle value is a local maxima

- If not, recurse into the appropriate half

That intention is totally lost in the imperative version, which relies on side effects to adjust indices to inspect the data you are interested in. You even require comments explaining the logic!

So what’s the problem of going fully functional in Swift?

Don’t look at my tail, I’m not optimising it#

In two words: stack overflows! I bet you will have faced at some point a stack overflow exception. This happens because whenever you invoke a new function you push a frame in the stack containing information needed for the runtime environment to run that function. So if you nest too many calls, the stack runs out of space and you get the dreaded stack overflow exception.

But wait a minute… Isn’t this a problem in Scala too? No! Why? Because Scala is a proper functional language in this aspect. This mechanism of recursive invocation instead of loops is so common in functional programming that all decent functional languages have what is called tail call optimisation (TCO), where instead of adding a new frame to the stack, the current frame is substituted if the last operation of a function is a call to itself: you simply won’t need the previous frame if you do nothing apart from recursively calling yourself.

See that @tailrec annotation before findAnyLocalMaximaInView? That is in fact signalling the Scala compiler to use this optimisation so you can confidently make potentially infinite recursive calls without incurring in a stack overflow exception.

But wait a minute, Swift DOES optimise tail calls#

Well, Swift sometimes optimises tail calls.

First of all, depending on the level of optimisation you set during the compilation phase, tail call optimisation may be left out completely causing a stack overflow in your debug build (when you use -Onone).

But even if you set the optimisation level to -Osize or -O as far as I know there is no guarantee that tail calls will be optimised: they aren’t mentioned at all in the Writing High-Performance Swift Code article of the Swift documentation, and I have only been able to find an ancient proposal in the Swift Forums regarding this (where they mention Scala’s @tailrec by the way) that was apparently closed without being implemented.

Summing up, unless I stand corrected (which I’d love), if you want to use one of the most common mechanisms of algorithm implementation in functional programming, this is tail recursion, you are at your own risk. Sometimes it will work, sometimes it won’t. But hey, maybe you are one of those who like to live on the edge. And we don’t have data structures requiring thousands or millions of frames in our stacks… Until we do.

Ok, but functional approaches are awful regarding space complexity#

Coding tests focus on both time and space complexity: does your algorithm use an awful amount of extra space to deliver its results? How does that extra space grow in relation to the input data? Is it a constant, linear, logarithmic or quadratic relationship? If the functional approach is awful regarding this, doesn’t that rule out this approach in favour of the imperative mutable approach?

When you think of functional programming you also usually think of immutability. You use to work with immutable data structures, transforming them in pipelines that in the end produce the desired results. All those transformations usually create intermediate data structures that are created and discarded on the fly, as steps leading you to the final goal.

In the case of recursive algorithms with a divide and conquer strategy you usually pass down chunks of the original input data until you reach some base case that is trivially solved, and then the solution is built up from the bottom. In the case of this problem we are passing down approximately half the input data until we reach the base cases. If we sum all these arrays that we create (?) we get that the space complexity of the functional implementation is O(n). This is, we need additional space that is linearly proportional to the size of the input data. Or maybe not?

Here both Scala and Swift are smart enough to provide mechanisms to avoid this problem:

- Swift returns

Slices orArraySlices when performing operations onArrays that return a chunk of the array (for exampleprefix(_:)orsubscript(_:)with aRange). These are views wrapping an underlying collection that don’t use any additional memory to hold their elements, so they haveO(1)time and space complexity. - Scala has a similar mechanism, although a bit more explicit. You can see it in the code when we invoke

numbers.view: we are creating a view which will wrap the underlying collection, so all the operations will be performed without creating additional structures. In our case we canslicethose arrays (well,IndexedSeqViews to be fair) ad infinitum with the certainty that we will remainO(1)compliant.

Conclusion#

So yes, maybe the title was a bit of a clickbait, although this is not the only reason why I think Swift still sucks as a real functional language. Anyway if you reached this point you likely agree that the functional approach is way better at conveying the original algorithm definition and:

- either you have learnt that Swift isn’t suitable to be used as a real functional language unless you want to ditch one of the most common approaches to implementing functional algorithms (tail recursion)

- or you know more than me regarding tail recursion optimisation in Swift, in which case I’d love to hear you in the comments!