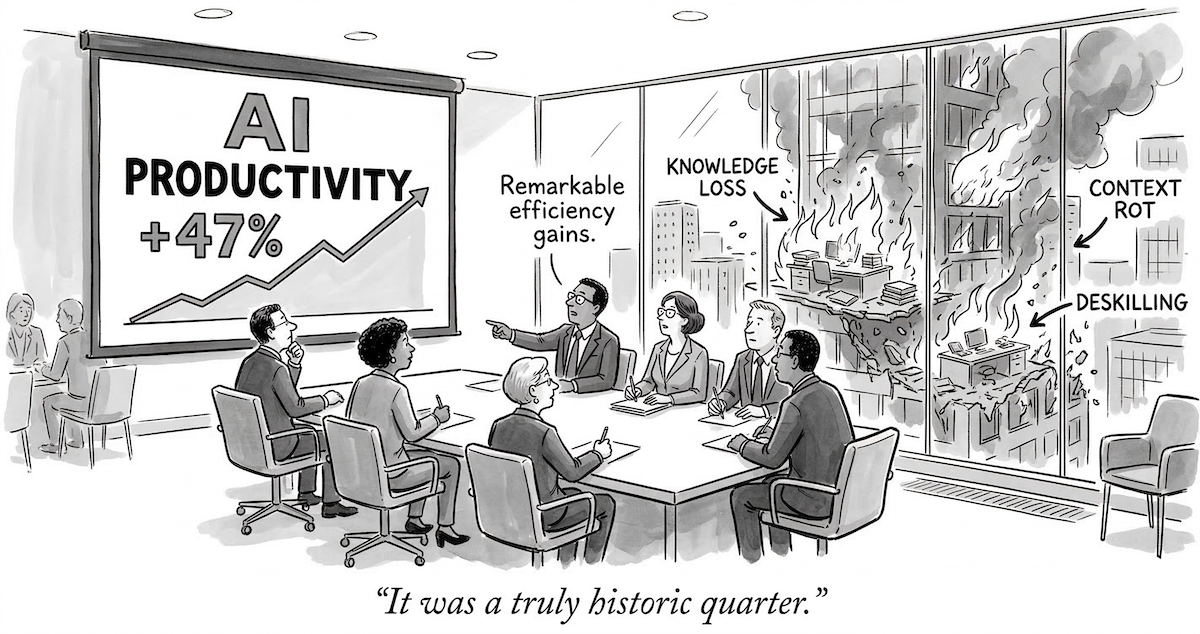

Everyone’s talking about AI productivity. Ship faster. Write more code. Automate the boring stuff. Kill the developers (well, kind of). And sure, that’s real — I’ve seen it myself, having built several Claude Code plugins for my team at Dow Jones. AI genuinely makes you faster.

But here’s the thing: if your entire AI strategy is “make developers more productive,” you’re focusing on one quarter of the picture and ignoring the three quarters that actually matter for the long-term health of your engineering organisation.

Bold claim? Absolutely. Let me earn it.

The knowledge problem nobody talks about

Before we get to AI, let me ask you something. Think about the best engineer you’ve ever worked with. The one who, when a production incident happens at 2 AM, somehow knows where the problem is before anyone else has finished reading the logs. The one who reviews your pull request and spots the architectural issue you didn’t even know existed.

Now ask yourself: how much of what makes that engineer great is written down somewhere?

If your answer is “not much,” congratulations — you’ve just identified the most valuable and most fragile asset in your organisation. That engineer’s knowledge is tacit: it lives in their head, was built through years of experience, and is extraordinarily hard to articulate. It’s the difference between knowing the syntax of a language and knowing when not to use a particular pattern.

The counterpart is explicit knowledge — the stuff that IS written down. Documentation, runbooks, architecture decision records, onboarding guides. The kind of knowledge you can hand to someone and say “read this.”

The central challenge of any engineering organisation — and this is not a new insight, by the way — is converting the first into the second. Because tacit knowledge, no matter how brilliant, walks out the door when that engineer takes a new job.

A model from 1995 that explains everything

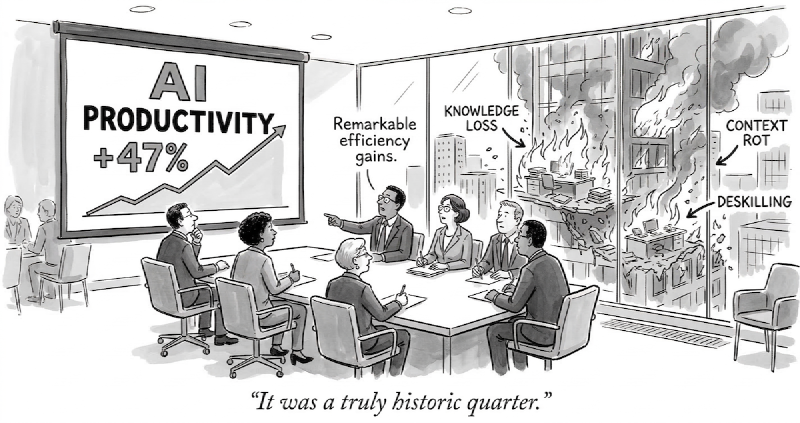

In 1995, Nonaka and Takeuchi published The Knowledge-Creating Company, where they described something called the SECI model (pronounced “SEH-kee,” in case you were wondering — I certainly was). It describes four modes of knowledge conversion that form a continuous spiral:

- Socialization (Tacit → Tacit): you learn by sitting next to an expert. Pair programming, mentoring, the hallway conversation where someone casually drops a gem that saves you three days of debugging.

- Externalization (Tacit → Explicit): someone writes down what they know. Design docs, ADRs, that one Confluence page that actually explains how the payment system works, or the script that automates a repetitive, well-established workflow.

- Combination (Explicit → Explicit): you synthesise scattered knowledge into something structured. Wikis, knowledge bases, that quarterly report that pulls together insights from five different teams.

- Internalization (Explicit → Tacit): you read the docs and, through practice, develop genuine understanding. Not just knowing what the architecture is, but feeling why it has to be that way.

These four modes form a spiral because the output of each phase feeds the next. Tacit knowledge gets externalised, combined with other knowledge, internalised by new people, who then socialise it further through their own practice. Round and round it goes, each turn creating new knowledge that didn’t exist before — which is why Nonaka called it knowledge creation, not knowledge transfer.

You can also think of it as two complementary movements: capture (Externalization + Combination) makes knowledge available to the organisation, while spread (Socialization + Internalization) gets knowledge into people’s heads.
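If it helps to see the model as a data structure, here is a minimal Python sketch of the four conversions and the two movements. The names (`Form`, `SECI_MODES`, `classify`, `movement_of`) are my own illustrative choices, not anything from Nonaka and Takeuchi.

```python
from enum import Enum


class Form(Enum):
    TACIT = "tacit"
    EXPLICIT = "explicit"


# The four SECI modes, keyed by (source form, target form) as listed above.
SECI_MODES = {
    (Form.TACIT, Form.TACIT): "Socialization",
    (Form.TACIT, Form.EXPLICIT): "Externalization",
    (Form.EXPLICIT, Form.EXPLICIT): "Combination",
    (Form.EXPLICIT, Form.TACIT): "Internalization",
}

# The two complementary movements described in the text.
MOVEMENTS = {
    "capture": {"Externalization", "Combination"},
    "spread": {"Socialization", "Internalization"},
}


def classify(source: Form, target: Form) -> str:
    """Return the SECI mode for a knowledge conversion."""
    return SECI_MODES[(source, target)]


def movement_of(mode: str) -> str:
    """Return 'capture' or 'spread' for a given SECI mode."""
    for name, modes in MOVEMENTS.items():
        if mode in modes:
            return name
    raise ValueError(f"unknown SECI mode: {mode}")


# Hypothetical activity: a senior engineer writes an ADR (tacit -> explicit).
mode = classify(Form.TACIT, Form.EXPLICIT)
print(mode, "->", movement_of(mode))  # prints "Externalization -> capture"
```

The point of the lookup table is simply that every activity in your team can be placed on the spiral by asking two questions: where does the knowledge start, and where does it end up?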

“That’s a nice academic model,” you might say. “But what does it have to do with AI?”

Everything.

AI disrupts every phase (and not equally)

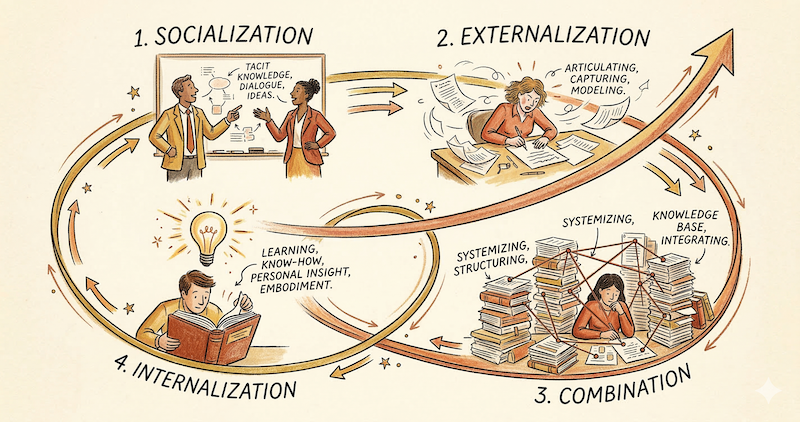

Here’s where it gets interesting. AI — and specifically LLMs — disrupts every single phase of the SECI spiral, but the impact is wildly uneven:

| SECI Phase | AI Role | Impact |
|---|---|---|
| Socialization | AI as synthetic pair partner | Moderate |
| Externalization | AI as articulation partner | Transformative |
| Combination | AI as synthesis engine | High |
| Internalization | AI as practice partner | Mixed |

The biggest impact is on Externalization, historically the hardest conversion. Ask any senior engineer to write down what they know, and you’ll get a mixture of procrastination, vague hand-waving, and a half-finished Confluence page abandoned three months ago. It’s not that they’re lazy (well, maybe; I’ll admit that I may hate writing documentation with a passion, but only may). It’s that articulating tacit knowledge is genuinely difficult. “We can know more than we can tell,” as Polanyi put it.

AI changes this equation dramatically. You can now think out loud with an LLM, and it helps you structure your vague understanding into coherent, shareable text. It’s like having a patient interviewer who asks the right follow-up questions and turns your stream of consciousness into an architecture decision record. The friction of externalization drops by an order of magnitude.

For Combination, AI serves as a synthesis engine that can search, cross-reference, and recombine explicit knowledge across silos. That information buried in a JIRA ticket from six months ago, connected to a design doc in Confluence, connected to a Slack thread that nobody bookmarked? An AI with the right context can pull those threads together. This is the “boring but transformative” category — not glamorous, but it solves the very real problem of knowledge scattered across fifteen different tools.

For Socialization, AI pair programming creates a new form of working alongside a knowledgeable colleague. The impact is moderate because it lacks the richness of genuine human interaction, but it’s available at 2 AM when your actual colleagues are, sensibly, asleep (as you probably should be too, by the way).

And for Internalization… well, this is where it gets complicated. AI can accelerate learning by explaining concepts, generating exercises, and providing on-demand tutoring. But it can also short-circuit the learning process entirely. If the AI writes the code and you just accept it, you never develop the tacit understanding that makes you effective. More on this in a moment.

The fourfold strategy (or why you’re focusing on the wrong quarter)

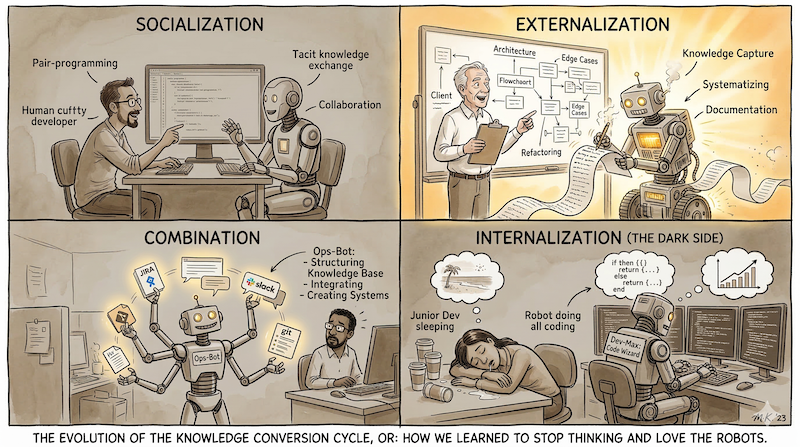

Given how AI disrupts the spiral, I think the strategic response has to be fourfold:

1. Build knowledge infrastructure: create shared tools and agents that encode team expertise and lower friction across both capture and spread. Skills that automate workflows while documenting why those workflows exist. Agents that connect to your wiki, your issue tracker, your codebase, and synthesise across them. This isn’t about a specific tool; it’s about building the infrastructure that lets the entire SECI spiral turn faster. But it is only the enabling layer for the other three goals: infrastructure without deliberate practice is an empty pipeline.

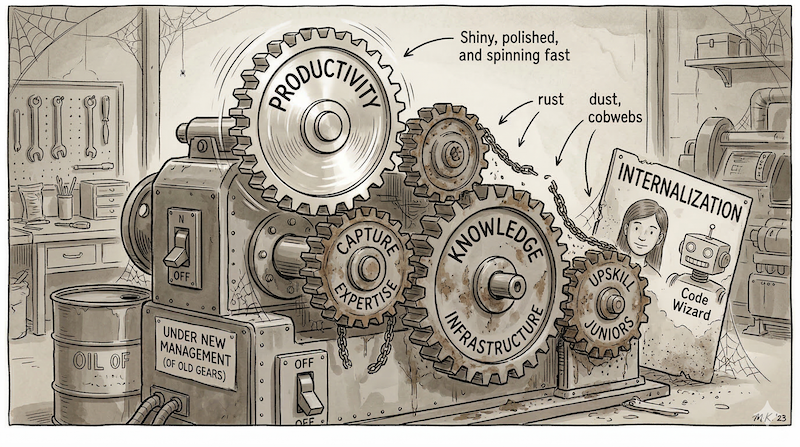

2. Boost productivity — the obvious one. Use AI to synthesise internal and external knowledge to accelerate work. This is where 99% of the conversation is today.

3. Capture senior expertise — use AI as an articulation partner to help senior developers externalise their tacit knowledge. That architect who “just knows” how the system should evolve? Sit them down with an AI and help them turn that intuition into documentation, ADRs, and design principles that outlive their tenure. This is Externalization, and it’s arguably more valuable than productivity — because it creates the knowledge base that makes productivity possible.

4. Upskill junior developers — the most neglected goal, and the one I feel most strongly about. AI should be deliberately used to accelerate Internalization: helping juniors build the deep tacit knowledge they need to become the strong engineers who make AI effective. Not just making them productive today, but growing their actual understanding.

Here’s the uncomfortable truth: almost everyone jumps straight to #2 without building the infrastructure that makes everything else possible, and almost nobody has a strategy for #4. The absence of an upskilling strategy is itself a strategy — it defaults to deskilling.

Lisanne Bainbridge identified this paradox back in 1983 in a paper called Ironies of Automation: the more we automate, the more we depend on highly skilled operators — whose skills are degraded by the automation itself. If juniors never struggle with problems because AI solves them, they never develop the tacit knowledge that makes senior engineers effective. And if we deplete that pipeline, we lose the very capacity to direct AI well.

The four goals are interdependent. Infrastructure (#1) enables everything but is useless without the people to fill it. Productivity (#2) depends on having externalised knowledge to draw from (#3). And upskilling (#4) ensures the pipeline of engineers who can do all three.

The dark side (because of course there is one)

Every one of these disruptions has a flip side, and I’d be dishonest if I didn’t mention them:

- Hidden explicit knowledge: your tools, agents, and plugins work beautifully — but nobody understands why the decisions embedded in them were made. The workflow rationale becomes invisible once it “just works.” This is Bainbridge’s irony applied to AI infrastructure.

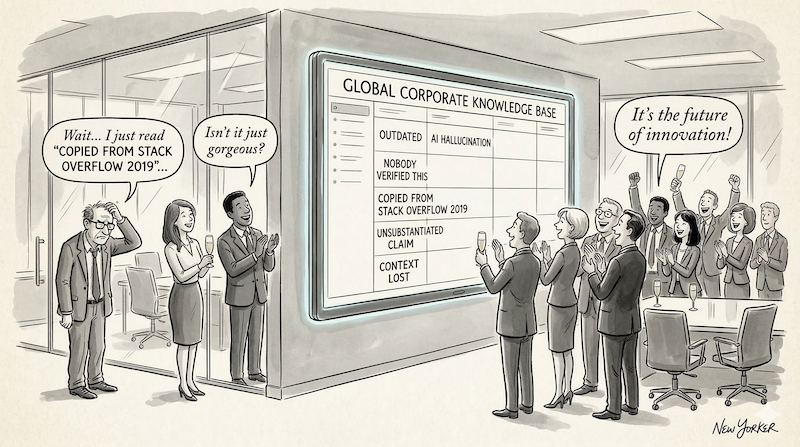

- False externalization: AI produces text that looks like expert knowledge but is actually hallucinated. If that enters your knowledge base unchecked, you now have institutional misinformation that nobody questions. That’s worse than having no knowledge at all.

- Context poisoning: if you build a knowledge system (as I argue you should), bad context compounds just like good context. A stale note about a reversed decision will confidently lead the AI — and your team — astray.

- Knowledge homogenization: if everyone uses the same AI with the same context, you risk everyone converging on a single way of thinking. The SECI spiral needs diverse tacit input; shared AI context can suppress that diversity.

- The curator burden: knowledge systems need maintenance. If nobody reviews, prunes, or updates them, you’ve just moved the graveyard of stale documents from Confluence to a new tool.

The common thread? None of these are solved by technology alone. They all require human judgment, review rituals, and active stewardship. The same engineers you’re trying to boost with AI need to be the ones keeping the knowledge system honest.
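To make the “review rituals” part a bit more concrete, here is a minimal Python sketch of a staleness check that could feed a curation queue. Everything in it is an assumption for illustration: the `Note` fields, the `superseded` flag, and the 180-day threshold are hypothetical, not a description of any real tool. Note that it only surfaces candidates; deciding what to fix or delete remains a human job.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Note:
    title: str
    last_reviewed: date
    superseded: bool = False  # True when the decision it records was reversed


def needs_attention(note: Note, today: date, max_age: timedelta) -> bool:
    """Flag notes that are superseded or have not been reviewed recently."""
    return note.superseded or (today - note.last_reviewed) > max_age


def review_queue(notes, today, max_age=timedelta(days=180)):
    """Return titles a human curator should look at, oldest review first."""
    flagged = [n for n in notes if needs_attention(n, today, max_age)]
    return [n.title for n in sorted(flagged, key=lambda n: n.last_reviewed)]


# Hypothetical knowledge-base entries.
notes = [
    Note("Payment retries ADR", date(2024, 1, 10)),
    Note("Queue migration plan", date(2024, 11, 2), superseded=True),
    Note("On-call runbook", date(2024, 12, 1)),
]
print(review_queue(notes, today=date(2025, 1, 15)))
# prints ['Payment retries ADR', 'Queue migration plan']
```

The first note is flagged because its last review is more than 180 days old, the second because it records a reversed decision, which is exactly the kind of context that poisons an AI’s answers if it stays in the system.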

So what now?

If you’ve read this far — first of all, thank you for your patience. Let me leave you with the thought that prompted this entire article.

The question isn’t whether AI will change how knowledge moves through your engineering organisation. It already has. Every time a developer uses an AI assistant, they’re participating in the SECI spiral: creating knowledge, consuming knowledge, sometimes creating false knowledge. This is happening whether you have a strategy for it or not.

The question is whether you shape that deliberately, or let it happen by accident.

A productivity-only AI strategy is like optimising one gear in a four-gear machine. It’ll spin faster, sure. But if the other three gears are rusty, the machine doesn’t move.